A few weeks back, Nina Stössinger asked on Twitter:

Isn’t it odd that the percent sign looks like “0/0” rather than, say, “/100” or “/00”?

This, it turns out, is a very good question. Like Nina, I had assumed that the percent sign was shaped so as to evoke the idea of a vulgar fraction, with a tiny zero aligned on either side of a solidus ( ⁄ ), or fraction slash. That said, something about those zeroes had always nagged at me. Specifically, as you divide any non-zero quantity by a smaller and smaller number the result tends ever closer to infinity (or rather, ±∞ as appropriate), until finally, when dividing by zero itself, you reach a mathematical singularity where the result cannot be computed: a numerical black hole of exotic properties and mind-bending implications. Throw in another zero as the numerator and you have a thoroughly nonsensical fraction. Though this is all terribly exciting from a philosophical point of view, it is not an especially useful situation to be in when trying to communicate the simple concept of division into hundredths. Either the ‘%’ had stumbled, blinking, from some secret garden of esoteric mathematics and into the real world, or there was more to the story. And so there was.
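For anyone who would like to watch that singularity arrive, here is a minimal sketch in Python. The helper names `shrinking_quotients` and `safe_divide` are hypothetical, invented purely for illustration, and the behaviour shown is that of Python's own numbers rather than of mathematics at large.

```python
import math

def shrinking_quotients(numerator, steps=5):
    """Divide `numerator` by 0.1, 0.01, 0.001, ... and collect the results."""
    return [numerator / 10.0 ** -k for k in range(1, steps + 1)]

def safe_divide(numerator, denominator):
    """Return the quotient, or None where Python declines to compute one."""
    try:
        return numerator / denominator
    except ZeroDivisionError:
        return None

# Ever smaller divisors give ever larger results (hypothetical sample value: 6).
positive = shrinking_quotients(6)     # climbs towards +infinity
negative = shrinking_quotients(-6)    # falls towards -infinity

# At zero itself, Python raises ZeroDivisionError instead of answering.
six_over_zero = safe_divide(6, 0)
zero_over_zero = safe_divide(0, 0)

# Floating-point numbers do carry an infinity, but zero times infinity
# is reported as NaN ("not a number") rather than as any particular value.
zero_times_inf = 0 * math.inf

print(positive)
print(negative)
print(six_over_zero, zero_over_zero, zero_times_inf)
```

Other languages and number systems make different choices at the final step, so this shows one convention only.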

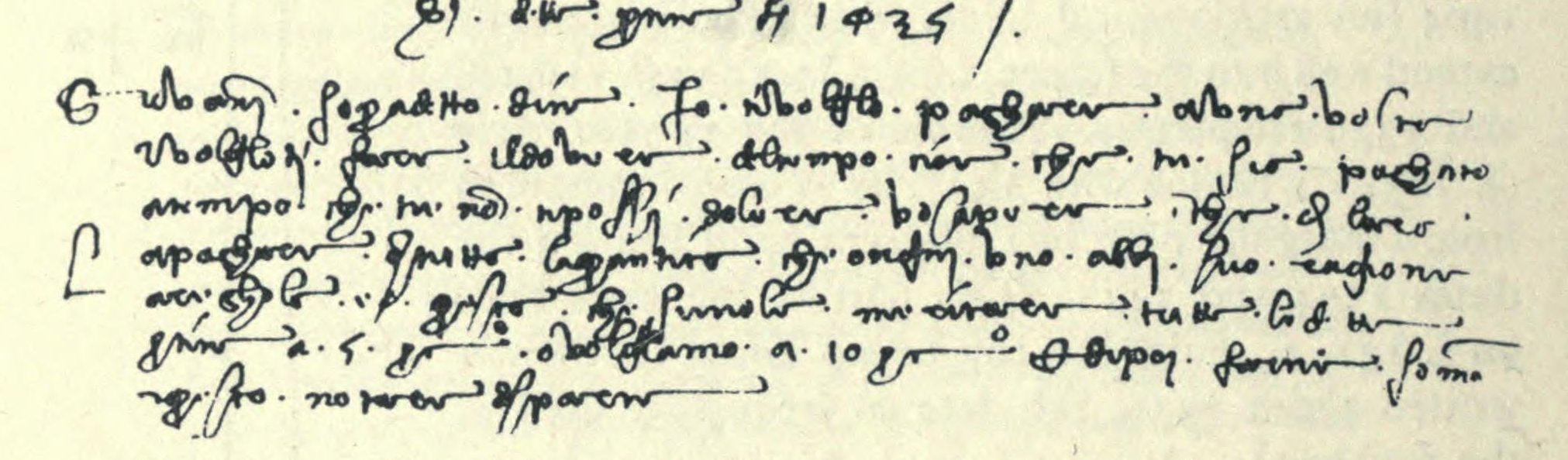

Writing in 1908, David Eugene Smith, later to be president of the Mathematical Association of America,[1] reported on a peculiar find he had made in an Italian manuscript written sometime during the early part of the fifteenth century. (Smith was cataloguing the mathematical holdings of one George Arthur Plimpton, a publisher and philanthropist who had amassed a huge library of ancient books.) What had caught Smith’s eye was an oddly attenuated abbreviation comprising a ‘p’, an elongated ‘c’, and a superscript ‘o’, or ‘ᵒ’, balanced upon the extended upper terminal of the ‘c’, as seen at top. From its context, Smith deduced that pcᵒ was a stand-in for the words per cento, or “per hundred”, more often abbreviated to per 100, p cento, or p 100.[2] It was the first step towards a distinct percent sign and, counterintuitively, it had precisely nothing to do with the digit zero.

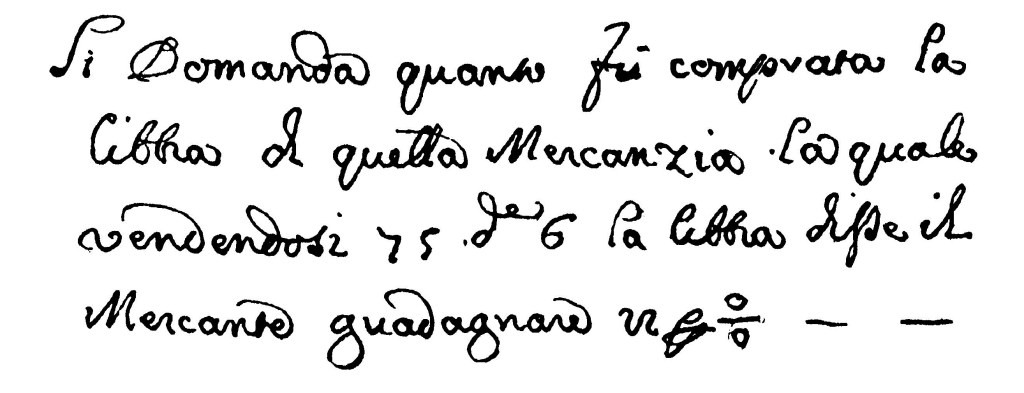

Smith picked up the trail with his weighty two-volume History of Mathematics, published in 1923,[3] wherein he printed an image of the percent sign caught midway between pcᵒ and ‘%’. In the example seen above, taken from an Italian manuscript of 1684, the word per had collapsed into a tortuous but common scribal abbreviation, while the ‘c’ had morphed into a closed circle surmounted by a short horizontal stroke. The imperturbable ‘o’ sat atop it. All that remained was for the vestigial per to vanish and for the horizontal stroke to assume its familiar diagonal orientation (a change that occurred sometime during the nineteenth century), and the evolution of the percent sign was complete.

Since then the ‘%’ has gone from strength to strength, and today we revel in a whole family of “per ————” signs, with ‘%’ joined by ‘‰’ (“per mille”, or per thousand) and ‘‱’ (per ten thousand). All very logical, on the face of it, and all based on a fundamental misunderstanding of how the percent sign came to be. Nina and I can comfort ourselves that we are not the first people, and likely will not be the last, to have made the same mistake.

1. Fite, W. Benjamin. “[Obituary]: David Eugene Smith”. The American Mathematical Monthly 52, no. 5 (1945): 237-238.
2. Smith, David Eugene. Rara Arithmetica; A Catalogue of the Arithmetics Written before the Year MDCI, With Description of Those in the Library of George Arthur Plimpton, of New York. Boston: Ginn & Company, 1908.
3. Smith, David Eugene. History of Mathematics. Boston; New York: Ginn & Company, 1923.

Comment posted by Bernd Schimansky on

Dividing by zero doesn’t result in infinity, but is simply undefined.

Comment posted by Keith Houston on

Hi Bernd — thanks for the catch! My maths isn’t what it used to be.

Comment posted by Saurabh on

Correction: Zero divided by zero is undefined. A positive number divided by zero is infinity. A negative number divided by zero is negative infinity.

Comment posted by Saurabh on

Correcting my correction, this is bollocks. Carry on.

Comment posted by Keith Houston on

Thanks for the honesty! Even so, your mention of positive and negative numbers reminded me to amend the text to include them.

Comment posted by Alan on

It’s not entirely wrong. See the Wikipedia article on “division by zero” for examples of systems in which division by zero is defined. For most practical purposes, however, division by zero is indeed undefined.

Comment posted by Marmot on

Anything divided by nothing is everything. No, not useful in practice, but conceptually it’s quite important—undefined seems poor design. Mathematical notation is like a chair, we can make it ergonomic or uncomfortable.

Comment posted by Anentropic on

But everything is still not enough, surely?

Comment posted by Stephan on

It’s undefined because every definition they considered resulted in the ability to write mathematical proofs with results analogous to 1 = 2.

It may be uncomfortable, but, like quantum theory, it’s our fault for having trouble accepting the nature of reality.

Comment posted by Jarin on

Actually, something divided by nothing is NOT everything, and here’s why:

6 divided by 2 is 3, because 3 multiplied by 2 is 6.

What would you multiply 0 by to get 6? 0 multiplied by infinity is still 0.

Comment posted by Neil on

If that were the case then nothing would exist. We agree a line is made of consecutive dots. A dot is zero by zero in size. So it takes an infinite number of dots to make any length line. This tells us that zero multiplied by different infinities gives you your lengths. eg 0 x ∞(6) = 6, 0 x ∞(9) = 9. I know there is no nomenclature for this but that is the actuality. The ‘real’ number system that we use does not fully equal reality.

Comment posted by Stephan on

Not quite. That doesn’t work because infinity is not a number. (Because anything times infinity is equal to infinity. If you could multiply by infinity or use it to cancel terms, you could prove that 1 = 2)

You can’t multiply infinity by zero because it’s an algebraic “irresistible force meets immovable object” situation.

Comment posted by Neil on

Actually it is correct in the real world. That’s why I differentiated between the man-made ‘real’ number system and the real world. The ‘real’ number system does not have infinity but it does not mean that it doesn’t exist. The problem with the 1=2 proof is that as soon as it starts multiplying by zero (a-b) where a = b then this allows (a+b) to equal b by the 4th line. The only instance where this can occur is when a and b are both 0. Any other numbers (e.g. 3) make the 4th line erroneous: (3+3)/0 = 3/0. The proof relies on a and b being the same number and by the 4th line this can only be true when a and b = 0 in reality. 6/0 = ∞(6) and 3/0 = ∞(3), which are not equal. One infinity does not equal another. (It’s like ‘unreal’ numbers, which are no more unreal than ‘real’ numbers except per our man-made interpretation of them.)

Comment posted by Phillip Susi on

No, infinity is not a number, be it real, imaginary, or irrational. Infinity only exists in limit theory, where it is used as a stand-in for “there is *no* number that bounds this limit”. You therefore cannot perform simple algebra on it, such as division or multiplication. Compare this with other non-real numbers, like the square root of negative one, or i, on which you *can* perform algebra.

Comment posted by Neil on

It’s not a number in the sense of representing a quantity. Infinity does however exist. There is no nomenclature for using it in our algebra system. Doesn’t mean that there couldn’t be, as I’ve shown with my examples. If you choose to use alternatives you could do algebra with it. It is only rigid adherence that prevents it. Don’t ever mistake scientific and mathematical adherence with reality. Yes, you can do algebra with unreal numbers. The reason is that they are just as real as ‘real’ numbers. We mistake positive squares as somehow being different to negative squares. If you swap negative along one of the axes then the rotated quadrants become the unreal ones. The mistake is that one length in one direction is somehow thought to be the same as the same length in a different direction. They are not. Multiplying 8m west x 8m north actually gives you 64mw.mn. It’s fine to represent it as 64m² but we should not forget the fact that the metres are not identical to each other. They point in different (perpendicular) directions. It’s important to remember this when dealing with unreal numbers so as to not see them as magical.

Comment posted by Francesco on

You say:

> by now the ‘p’ had vanished entirely

Actually, the ‘p’ has not vanished, it’s still there. The strange “glyph” before the “o/o” is the standard abbreviatura for “per” in those centuries:

See Cappelli, Dizionario di Abbreviature Latine ed Italiane, p. 257 (you’ll need to scroll to the right to see the most common form of “per”, which is exactly the one depicted in your image).

The image therefore should be read “guadagnare 22 [per] [cto]”: not only is the “p” still there, but the whole “per” is there, even though it’s abbreviated.

Comment posted by Keith Houston on

Hi Francesco — you’re right! Thanks for the comment. And thank you for reminding me about the “per” sign.

Comment posted by Jeremy Wickins on

Somewhere back when ah were nobbut a lad, one of my maths teachers alluded to this. He said that the bottom “o” was a mis-shapen “c”, but that the stroke was the “per”. I was never entirely happy with that because it didn’t explain the top “o”, but at least he was correct about part of it! Which leads to another question – did the use of the stroke for “per” come from misunderstanding the stroke in per cent, or does it have another origin?

Comment posted by Keith Houston on

Hi Jeremy — thanks for the comment! Do you mean the use of ‘/’ in division operations? That’s a good question. I know that the obelus, or ‘÷’ comes from an ancient Greek editing symbol used to mark spurious text, but I don’t know when it was joined by ‘/’.

Comment posted by Thomas A. Fine on

I have to wonder if this is related to the “per” sign, which was very widely used in the 19th century and earlier, and yet has basically disappeared completely. It’s in Unicode (⅌), but is so new in Unicode that it isn’t supported on my computer, and I just get a blank there between the parentheses.